AI agents are now autonomously fixing broken pipelines, enforcing data quality, and managing infrastructure — and the implications for enterprise data teams are significant.

Data engineering has long been one of the most human-intensive disciplines in enterprise technology. Pipelines break unpredictably. Schemas drift without warning. Upstream data sources change without notice. For years, the answer has been more engineers, better monitoring, and faster incident response, a cycle that scales poorly and burns people out.

In 2026, that model is being replaced. Agentic AI, a class of artificial intelligence systems that can pursue objectives autonomously, reason about complex problems, and take action without step-by-step human instruction, is now handling a meaningful share of the operational work that data teams once did manually. Organizations deploying these systems are reporting a 60% reduction in routine engineering toil, a fourfold improvement in mean time to resolution for data incidents, and a drop in pipeline failure rates as agents learn to catch and fix problems before they escalate.

This article examines what agentic AI actually is, how it is being applied across the data engineering lifecycle, what infrastructure it requires, and what governance considerations organizations need to have in place before deploying it.

What Makes Agentic AI Different from Conventional Automation

The term "agentic AI" is frequently used interchangeably with automation or even generative AI, but the distinction matters. Conventional automation follows predefined rules: if this happens, do that. Generative AI produces content (text, code, summaries) in response to prompts. Agentic AI does something categorically different: it is given a goal, plans how to achieve it, acts in real systems, observes the results, and adjusts its approach accordingly.

In a data engineering context, the practical difference looks like this. A traditional monitoring tool detects a pipeline failure and sends an alert to an engineer. An agentic system detects the same failure, diagnoses the root cause by reasoning over logs and historical incident data, executes a targeted fix, validates the result, and updates its decision model based on what it has learned, often resolving the issue entirely before any human is involved.

Modern AI agents are built from several coordinated components. A cognitive module handles reasoning and decision-making. A perception module collects and interprets inputs from logs, APIs, and upstream systems. A memory module retains context across incidents, building institutional knowledge over time. An action module executes decisions against live infrastructure. A learning module refines performance from experience. And a communication module escalates to humans when the situation genuinely warrants it.

The key characteristic that makes this different from previous automation approaches is the loop: perceive, reason, act, learn, repeat. It is this continuous cycle that allows agentic systems to handle novel situations, not just the scenarios their creators anticipated.
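
The perceive, reason, act, learn cycle can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production design: the `Incident` and `Agent` types, the three-token log format, and the action names are all hypothetical, and `act` is a placeholder that only prints what it would do.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class Incident:
    pipeline: str
    error: str


@dataclass
class Agent:
    # Memory maps an error signature to a fix that worked before (hypothetical store).
    memory: Dict[str, str] = field(default_factory=dict)

    def perceive(self, log_line: str) -> Optional[Incident]:
        # Assumed log format: "<pipeline> ERROR <error_signature>"
        parts = log_line.split()
        if len(parts) == 3 and parts[1] == "ERROR":
            return Incident(pipeline=parts[0], error=parts[2])
        return None

    def reason(self, incident: Incident) -> str:
        # Reuse a remembered fix, otherwise hand off to a human.
        return self.memory.get(incident.error, "escalate_to_human")

    def act(self, incident: Incident, action: str) -> bool:
        # Placeholder for real execution against infrastructure.
        print(f"{incident.pipeline}: applying {action}")
        return True

    def learn(self, incident: Incident, fix: str) -> None:
        self.memory[incident.error] = fix

    def step(self, log_line: str, human_fix: Optional[str] = None) -> str:
        incident = self.perceive(log_line)
        if incident is None:
            return "no_action"
        action = self.reason(incident)
        if action == "escalate_to_human":
            # Learn from the human's resolution, if one is supplied.
            if human_fix is not None:
                self.learn(incident, human_fix)
            return action
        if not self.act(incident, action):
            # Forget a fix that stopped working so the next occurrence escalates.
            self.memory.pop(incident.error, None)
        return action
```

In this sketch the first occurrence of an error is escalated, the human's fix is stored, and the second occurrence of the same error is handled from memory. A real agent would replace the dictionary lookup with reasoning over logs and incident history.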

How Agents Are Being Deployed Across the Data Engineering Lifecycle

The most significant shift in enterprise deployments over the past year is the recognition that agentic AI works best when it is applied across the entire data engineering lifecycle, not bolted onto production monitoring as an afterthought. Organizations that have deployed what some are calling an AI agent marketplace, meaning a set of specialized agents each working at one stage of the software development lifecycle, are seeing compounding benefits that point-solution deployments do not achieve.

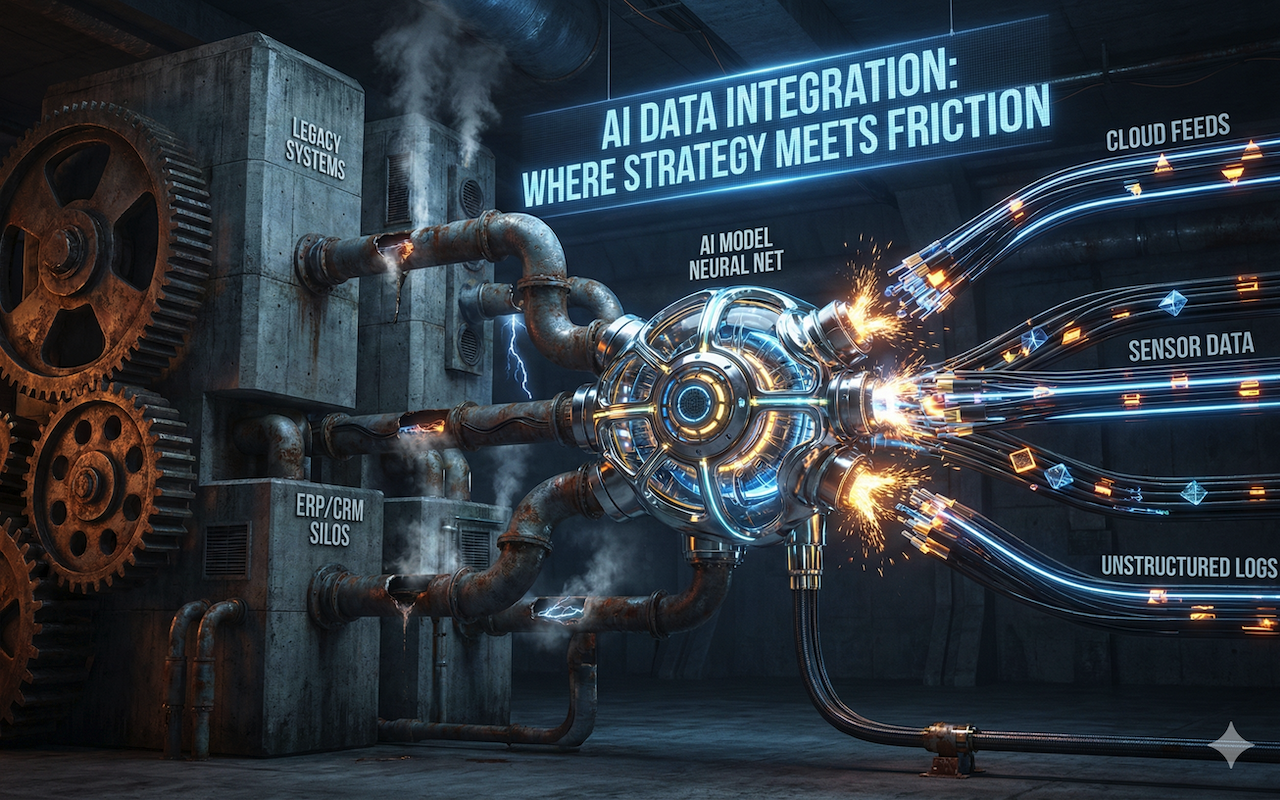

Image by Unsplash.

Requirements Validation

At the requirements stage, agents cross-reference new data requests against existing infrastructure capacity, compliance obligations, and feasibility constraints before any design work begins. A business request for five years of historical transaction records is automatically assessed for storage costs and GDPR implications. Conflicting data definitions between teams are flagged for clarification. The result is that data engineers receive requirements that are already validated as feasible and compliant, rather than discovering problems weeks into a build.
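
As a rough illustration of the kind of check described above, the sketch below estimates storage for a data request and flags long retention of personal data. Every name and number here is an assumption made for the example: `DataRequest`, `assess`, the price per GB, and the retention limit are hypothetical. GDPR itself sets no fixed retention period, so the limit stands in for an organization's own policy.

```python
from dataclasses import dataclass
from typing import Dict, List, Union


@dataclass
class DataRequest:
    name: str
    rows_per_day: int
    bytes_per_row: int
    retention_days: int
    contains_personal_data: bool


PRICE_PER_GB_MONTH = 0.02              # hypothetical storage price
MAX_PERSONAL_RETENTION_DAYS = 2 * 365  # hypothetical internal policy limit


def assess(request: DataRequest) -> Dict[str, Union[float, List[str]]]:
    # Steady-state volume once the full retention window has been filled.
    total_gb = (
        request.rows_per_day * request.bytes_per_row * request.retention_days / 1e9
    )
    flags: List[str] = []
    if (
        request.contains_personal_data
        and request.retention_days > MAX_PERSONAL_RETENTION_DAYS
    ):
        flags.append("retention_exceeds_policy_for_personal_data")
    return {
        "total_gb": round(total_gb, 1),
        "monthly_cost": round(total_gb * PRICE_PER_GB_MONTH, 2),
        "flags": flags,
    }
```

For a request of one million 500-byte rows per day kept for five years, this yields roughly 912.5 GB and one compliance flag, which is the point at which a real agent would route the request back for clarification.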

Design and Architecture

During the design phase — particularly for organizations migrating from legacy systems, which remains a dominant project type in 2026 — design optimizer agents analyze legacy metadata, infer undocumented schemas, and simulate how proposed ETL and ELT pipelines will perform under peak load. They identify resilience gaps and suboptimal resource allocations, and they propose specific architectural changes before implementation begins. This early-stage validation is preventing a class of expensive re-architecting projects that have historically consumed significant engineering time.

Build and Code Quality

Code standardizer agents embedded in CI/CD pipelines function as continuous quality guardians during the build phase. They detect suboptimal Spark operations, missing error handling, inadequate logging, and deviations from agreed coding standards. Importantly, they do not just flag problems; they open pull requests with specific refactoring suggestions. They also block merges when code fails critical quality checks, meaning that substandard code cannot enter the main branch without an explicit human override. Technical debt that would previously accumulate invisibly for months is now intercepted at the point of creation.
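
A merge gate of this kind can be illustrated with a toy static check. The sketch below uses Python's standard `ast` module to find two patterns in Python source: bare `except:` clauses (treated here as critical) and `print` calls used where logging would be expected (treated as warnings). The severity labels and the `find_issues` and `merge_allowed` functions are assumptions for illustration. It does not attempt the Spark-specific analysis mentioned above.

```python
import ast
from typing import List


def find_issues(source: str) -> List[str]:
    issues = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.ExceptHandler) and node.type is None:
            # "except:" with no exception type swallows every error.
            issues.append(f"line {node.lineno}: bare except (critical)")
        elif (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Name)
            and node.func.id == "print"
        ):
            issues.append(f"line {node.lineno}: print used instead of logging (warning)")
    return issues


def merge_allowed(source: str) -> bool:
    # Block the merge only when at least one critical issue is present.
    return not any("(critical)" in issue for issue in find_issues(source))
```

A CI job could call `merge_allowed` on each changed file and fail the check when it returns `False`, leaving warnings as review comments rather than blockers.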

Testing

Test orchestrator agents autonomously schedule and run unit, integration, and end-to-end tests, scaling resources dynamically based on pipeline complexity. They validate performance against SLA targets, conduct stress tests to simulate burst traffic, and use machine learning to detect subtle data drift and quality anomalies in test data. Issues that would previously surface only in production and trigger the midnight incident cycle are now reliably caught in pre-deployment testing.
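
The drift detection mentioned above is described as machine-learning based. As a much simpler stand-in, the sketch below uses a plain statistical check: it measures how far the mean of a new batch sits from a baseline mean, in units of standard error, and flags the batch when that distance exceeds a threshold. The function names and the threshold of 3.0 are assumptions, and the baseline is assumed to have non-zero variance.

```python
import math
import statistics
from typing import Sequence


def mean_shift_z(baseline: Sequence[float], batch: Sequence[float]) -> float:
    base_mean = statistics.fmean(baseline)
    base_std = statistics.stdev(baseline)  # sample standard deviation
    standard_error = base_std / math.sqrt(len(batch))
    return (statistics.fmean(batch) - base_mean) / standard_error


def has_drifted(
    baseline: Sequence[float], batch: Sequence[float], threshold: float = 3.0
) -> bool:
    return abs(mean_shift_z(baseline, batch)) > threshold
```

A mean-shift test only catches one kind of drift. Changes in variance, category mix, or null rates need their own checks, which is where learned detectors earn their place.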

Production Operations

Auto-remediator agents represent the most operationally visible application of agentic AI in data engineering today. By continuously monitoring service logs, cross-referencing anomalies against known error databases, and executing self-healing actions such as schema corrections, data quarantine, infrastructure scaling, and job retries with adjusted parameters, these agents handle the majority of routine production incidents without human involvement. In leading deployments, agents with 18 months of operational memory resolve incident types they have seen before in under 2 minutes, compared to an average human response time of 40 minutes for the same categories.
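
Two of the behaviours described above, matching an anomaly against a known-error database and retrying a job with adjusted parameters, can be sketched as follows. The error patterns, action names, and memory limits are hypothetical, and `job` is any callable that reports success or failure for a given memory setting.

```python
import re
from typing import Callable, Optional

# Hypothetical known-error database: log pattern -> remediation action.
KNOWN_ERRORS = [
    (re.compile(r"OutOfMemoryError"), "retry_with_more_memory"),
    (re.compile(r"column .* not found", re.IGNORECASE), "quarantine_batch"),
]


def choose_action(log_line: str) -> str:
    for pattern, action in KNOWN_ERRORS:
        if pattern.search(log_line):
            return action
    # Unknown errors go to a human rather than being guessed at.
    return "escalate"


def retry_with_more_memory(
    job: Callable[[int], bool], memory_gb: int, max_memory_gb: int = 64
) -> Optional[int]:
    # Double the memory on each failed attempt until the cap is exceeded.
    while memory_gb <= max_memory_gb:
        if job(memory_gb):
            return memory_gb
        memory_gb *= 2
    return None
```

`retry_with_more_memory` returns the setting that worked, or `None` once the cap is exceeded, at which point the incident would be escalated. Capping the retries keeps a self-healing loop from turning into a runaway cost.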

The Cloud Infrastructure Required for Enterprise-Scale Deployment

Agentic AI at enterprise scale requires more than a large language model pointed at a log file. The infrastructure that supports production deployments in 2026 is a carefully layered stack connecting perception, memory, reasoning, and action into a coherent operating system for data.

At the knowledge and memory layer, retrieval-augmented generation pipelines, backed by vector databases, give agents access to organizational knowledge at query time, including data dictionaries, compliance rules, incident history, and supplier API documentation. This allows agents to make context-aware decisions rather than reasoning in a vacuum. Short-term cache memory handles in-session context; long-term semantic stores build institutional knowledge that persists across months of operation.
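
The retrieval step of such a pipeline can be illustrated without any external services. In the sketch below, a bag-of-words count stands in for a real embedding model and a Python list stands in for a vector database. Both are simplifying assumptions, as are the function names. The ranking by cosine similarity is the part that carries over to real systems.

```python
import math
from collections import Counter
from typing import List


def embed(text: str) -> Counter:
    # Toy embedding: word counts. A real system would call an embedding model.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[token] * b[token] for token in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: List[str], k: int = 1) -> List[str]:
    query_vec = embed(query)
    ranked = sorted(
        documents, key=lambda doc: cosine(query_vec, embed(doc)), reverse=True
    )
    return ranked[:k]
```

The retrieved passages would then be placed in the agent's prompt alongside the incident details, so the agent reasons with the relevant data dictionary entry or past incident in view.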

The cognitive layer, which is the agent's reasoning and decision-making infrastructure, coordinates multiple specialized agents simultaneously through multi-agent orchestration frameworks. AWS, Google Cloud, and Azure now offer mature tooling at this layer, including built-in responsible AI guardrails that can enforce constraints on agent behavior before actions are taken.

The action and execution layer carries out what the cognitive layer decides. It interfaces directly with data infrastructure, including compute clusters, storage systems, transformation engines, and schema registries. Every action generates a full lineage record, creating an unbroken audit trail from the triggering event to resolution. That trail serves internal governance requirements and supports record-keeping obligations such as those the EU AI Act places on high-risk AI systems.
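
One way to make an audit trail tamper-evident is to chain records by hash, so that each record commits to the one before it. The sketch below shows that idea with the standard library. It is an assumption about how such a trail could be built, not a description of any particular platform or of what a regulation requires, and the record fields are hypothetical.

```python
import hashlib
import json
from typing import Dict, List

GENESIS_HASH = "0" * 64


def _digest(body: Dict[str, str]) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_record(trail: List[Dict[str, str]], event: str, action: str) -> None:
    prev_hash = trail[-1]["hash"] if trail else GENESIS_HASH
    body = {"event": event, "action": action, "prev_hash": prev_hash}
    trail.append({**body, "hash": _digest(body)})


def verify(trail: List[Dict[str, str]]) -> bool:
    prev_hash = GENESIS_HASH
    for record in trail:
        body = {key: record[key] for key in ("event", "action", "prev_hash")}
        # Each record must point at its predecessor and match its own digest.
        if record["prev_hash"] != prev_hash or record["hash"] != _digest(body):
            return False
        prev_hash = record["hash"]
    return True
```

Editing any earlier record changes its digest and breaks every link after it, so `verify` returns `False` for a trail that has been altered after the fact.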

A continuous learning infrastructure completes the stack. When an auto-remediation action fails, or when a human engineer overrides an agent's decision, that signal feeds back into the model, refining future judgment without requiring manual retraining cycles. The system compounds its effectiveness over time, which is why organizations that deployed early are now reporting substantially better outcomes than those starting in 2026.

Governance and Ethics: What Needs to Be in Place Before Deployment

As agentic systems take on greater decision-making authority over live data infrastructure, the governance requirements become correspondingly more serious. Several principles have moved from recommended practice to operational necessity over the past eighteen months.

Explainability is the most fundamental. Every significant agent decision must generate a reasoning log, a structured record of how the agent interpreted the situation and why it chose the action it took. This is not optional for organizations operating under GDPR, the EU AI Act, or most enterprise risk frameworks in 2026. When an agent quarantines a data batch or blocks a code deployment, auditors and engineers need to inspect the reasoning immediately, not reconstruct it after the fact.
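
A reasoning log is easiest to inspect when it is a structured record rather than free text. The sketch below shows one possible shape, serialized to JSON. The field names are assumptions chosen for the example, not a standard schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List


@dataclass
class ReasoningLog:
    trigger: str         # what the agent observed
    evidence: List[str]  # inputs it relied on
    action: str          # what it decided to do
    rationale: str       # why it chose that action
    confidence: float
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_json(log: ReasoningLog) -> str:
    return json.dumps(asdict(log), sort_keys=True)
```

Writing the record at decision time, before the action runs, is what lets an auditor inspect the reasoning immediately instead of reconstructing it later.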

Human-in-the-loop mechanisms must be defined explicitly before deployment, not added reactively after an agent makes a consequential error. Organizations need clear escalation criteria: which categories of decision require human approval, and at what confidence threshold does an agent escalate rather than act autonomously? These parameters need to be designed, tested, and adjusted based on operational experience.
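
Escalation criteria of this kind can be written down as an explicit policy before any agent goes live. The sketch below is a minimal example: the action categories, the 0.9 threshold, and the `decide` function are hypothetical values that a team would set and tune for itself.

```python
# Hypothetical policy: these action categories always need human sign-off.
ALWAYS_REQUIRE_APPROVAL = {"drop_table", "delete_data"}
# Hypothetical threshold: below this, the agent escalates instead of acting.
CONFIDENCE_THRESHOLD = 0.9


def decide(action: str, confidence: float) -> str:
    if action in ALWAYS_REQUIRE_APPROVAL:
        return "request_approval"
    if confidence < CONFIDENCE_THRESHOLD:
        return "escalate"
    return "act"
```

Checking the category before the confidence score means a destructive action is never taken autonomously, however confident the agent is.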

Bias detection in the training data and historical incident databases that agents learn from is equally important. If past incidents were disproportionately caused by a particular class of upstream data source, an agent trained on that history may develop systematic blind spots or over-reactions. Periodic auditing of agent decision patterns against ground truth outcomes is becoming a standard part of agentic AI operations in mature deployments.
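
One simple form of such an audit compares how often the agent wrongly flags good data from each upstream source. The sketch below computes a per-source false positive rate from labelled outcomes. The tuple layout and the function name are assumptions for the example.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# Each decision: (source, agent_flagged, actually_bad)
Decision = Tuple[str, bool, bool]


def false_positive_rate_by_source(decisions: Iterable[Decision]) -> Dict[str, float]:
    good = defaultdict(int)          # batches that were actually fine
    flagged_good = defaultdict(int)  # fine batches the agent flagged anyway
    for source, agent_flagged, actually_bad in decisions:
        if not actually_bad:
            good[source] += 1
            if agent_flagged:
                flagged_good[source] += 1
    return {source: flagged_good[source] / good[source] for source in good}
```

A source whose rate sits well above the others is a candidate for the over-reaction described above, and worth checking against the incident history the agent learned from.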

Finally, the transition for data engineering teams requires genuine investment. Engineers whose careers were built on writing transformation code and managing pipeline operations are being asked to shift to a qualitatively different role: defining agent objectives, evaluating agent performance, designing governance frameworks, and intervening when agents encounter situations outside their training. That transition does not happen automatically, and organizations that have invested in structured upskilling programs are reporting significantly smoother deployments and better long-term outcomes.

Where Is This Heading?

Organizations that deployed agentic data engineering platforms in 2025 are compounding advantages that are becoming difficult for later movers to close quickly. The systems are more capable than they were a year ago, not because the underlying models have changed, but because they have accumulated operational experience that cannot be quickly transferred or replicated.

The next phase being discussed in enterprise architecture circles and hyperscaler roadmaps is autonomous data product development: agents that do not simply manage existing infrastructure but design, build, test, and deploy new data products end-to-end, based on business objectives stated in natural language. The technical foundations for this are largely in place. The remaining constraints are governance, organizational readiness, and the hard-earned trust that comes from watching agentic systems perform reliably over time.

For data and technology leaders, the question in 2026 is no longer whether agentic AI belongs in their data stack. It is how quickly they can build the infrastructure, governance, and team capabilities required to deploy it responsibly, and how much ground they are comfortable conceding to organizations that started earlier.
